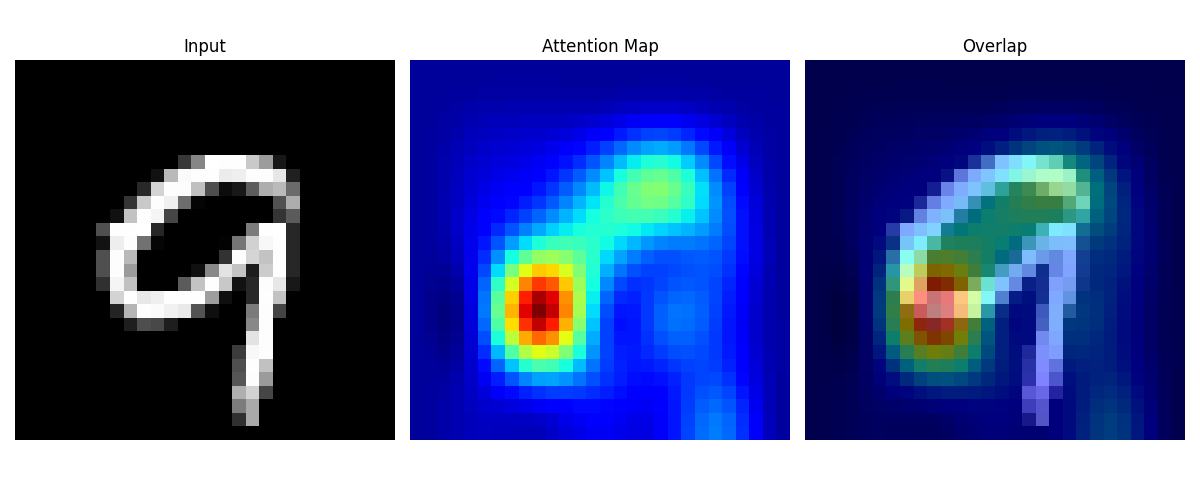

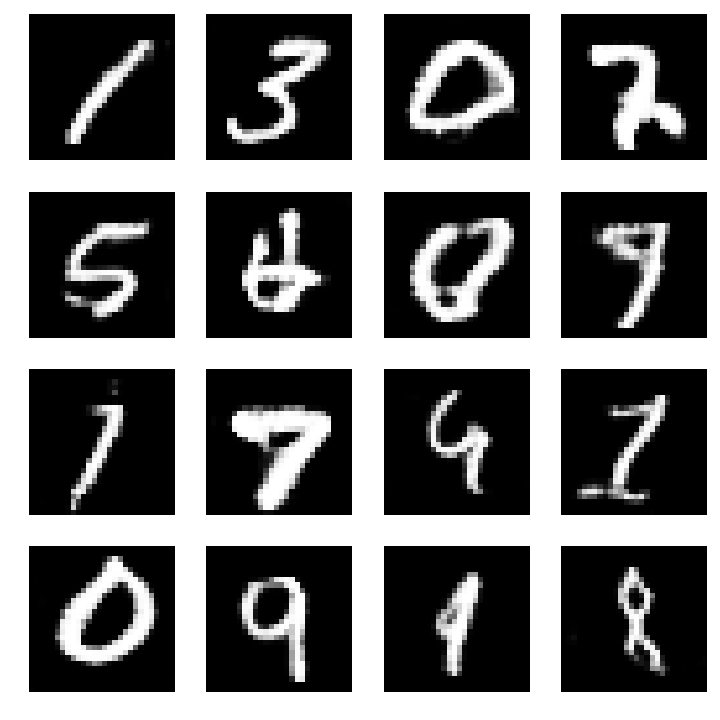

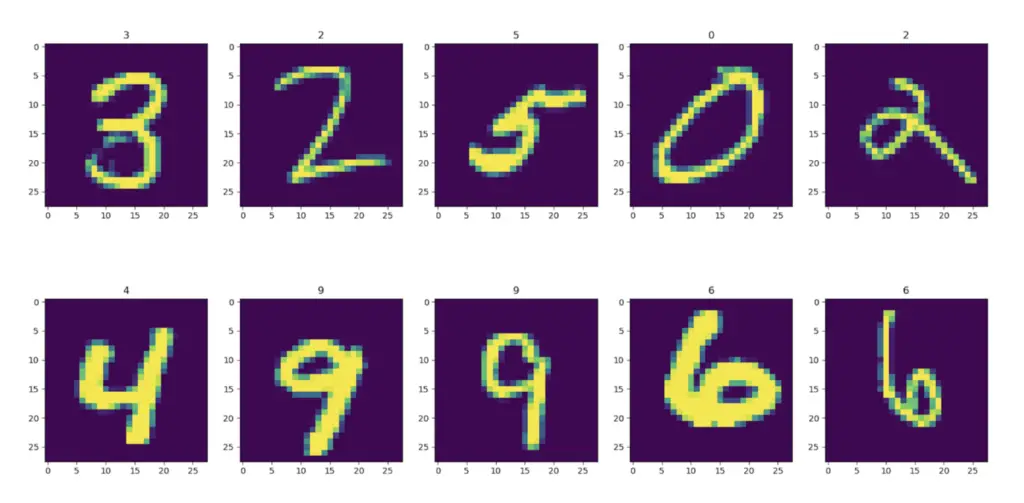

This insight allows us to detect if some neurons are redundant. Then we can identify similar behaviors among neurons in the convolutional neural networks. By visualizing activations, we are able to see how neurons respond to the inputs.

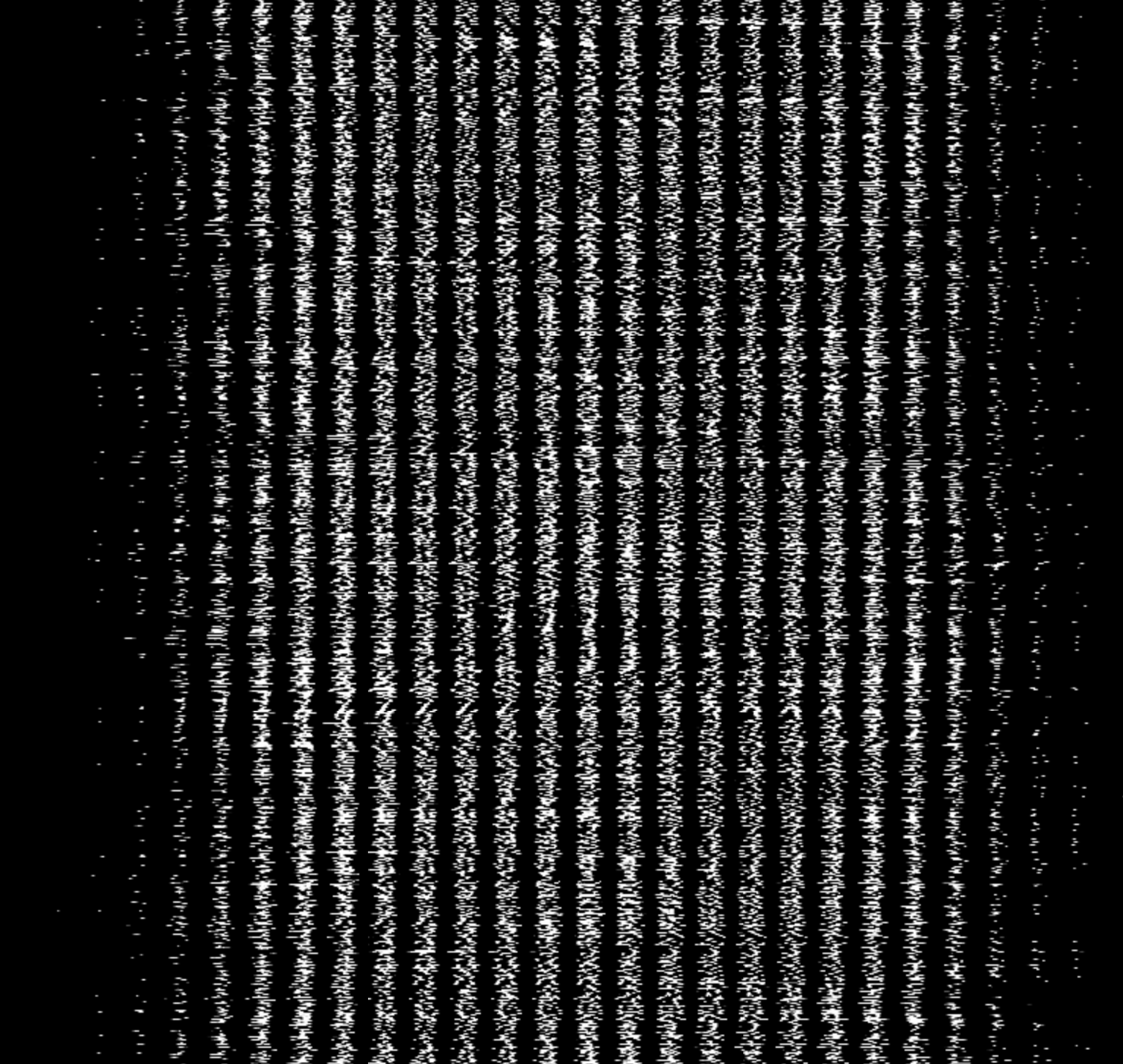

If the learning goes wrong with gradient vanishing or explosion problems, we can quickly see connections stop changing at some points. By visualizing the dynamics of weights, we are able see how connections are changing during training. Here we have the two elements that we want to visualize: activations and weights. These activations trigger some losses that go backward layer by layer to provide the gradient that we can use to adjust the connections between neurons, which are represented by the weights. In the feedforward pass, the input signals activate neurons layer by layer and the activations a.k.a feature maps, of each layer are finally transformed to the last fully connected layers (we would use the term activation and feature map interchangeably for the rest of this paper). Then we need to take a close look at the energy that drives a convolutional neural networks in their learning and predicting. Such dynamics function like energy that drives particles around so we often see physics terminologies like acceleration, gradient descent, or momentum in the deep learning communities. Data flows inside neural networks that eventually changes the connections between neurons. We consider neural networks are similar to scientific simulation systems and the learning and predicting process are analogous to the dynamics inside a large simulation system. But with overly increased depth, not only the time complexity greatly increases,but also the accuracy may even saturates or drops Deeper and larger networks are in demand for solving modern problems. Sometimes, deep neural networks stop to learn because of the vanishing gradient or gradient explosion problem. These hyper-parameters are so important that they affect the performance of networks. Researchers need to test different architectures and hyper-parameters such as learning rates, optimizers, batch sizes, etc. 
The second fold of challenge is the unavailable of general and efficient predefined architecture for same type of problems. Normally training the above mentioned convolutional neural networks need several weeks. The total number of trainable parameters (431k in LeNet-5, 61M in AlexNet) is big enough to understand the amount of computation that incurs. The first fold is the extreme large amount of computation during the training process. Training a deep convolutional neural network is slow by two folds of challenges. Very large CNNs, like AlexNet, VGGNet, ResNet, contain dozens to hundreds of layers. They are trained for vision and language recognitions and many other domains such as drug discovery and genomics. READ FULL TEXT VIEW PDFĭeep Convolutional Neural Networks (CNNs) are very complex systems. LeNet-5 and VGG16 using in situ TensorView. Visualization can provide guidance to adjust the architecture of networks, orĬompress the pre-trained networks.

Only a small number of lines of codes are injected in TensorFlow framework.

This avoid heavy I/O overhead for visualizing large dynamic systems. Paraview to provide real-time visualization during training and predicting It leverages the capability of co-processing from

In situ TensorView is a loosely coupled in situ visualization openįramework that provides multiple viewers to help users to visualize and The training and functioning of CNNs as if they are systems of scientific We present in situ TensorView to visualize Simulations, visualization tools like Paraview have long been utilized to Networks are learning and making predictions. It is both interesting and helpful to visualize the dynamics within such deepĪrtificial neural networks so that people can understand how these artificial They can adapt their internal connections to recognize images, texts and more. Convolutional Neural Networks(CNNs) are complex systems.
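The idea of spotting redundant neurons from their activations can be illustrated with a small sketch. This is not the method used by in situ TensorView, which the text does not specify. It assumes redundancy can be approximated by a high cosine similarity between two neurons' responses to the same inputs. The function names, the threshold of 0.99, and the toy activations are all hypothetical.

```python
import math
from itertools import combinations


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length activation vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # a silent neuron is treated as similar to nothing
    return dot / (norm_u * norm_v)


def find_similar_neurons(activations, threshold=0.99):
    """Return (name_a, name_b, similarity) for neuron pairs at or above threshold.

    activations maps a neuron name to its responses over the same set of inputs.
    The threshold is an assumed cut-off, not a value taken from the paper.
    """
    pairs = []
    for (name_a, act_a), (name_b, act_b) in combinations(activations.items(), 2):
        similarity = cosine_similarity(act_a, act_b)
        if similarity >= threshold:
            pairs.append((name_a, name_b, similarity))
    return pairs


# Toy data: n1 is n0 scaled by 2, so the two respond identically up to gain.
toy_activations = {
    "n0": [0.1, 0.9, 0.4, 0.0],
    "n1": [0.2, 1.8, 0.8, 0.0],
    "n2": [0.9, 0.0, 0.1, 0.7],
}
similar = find_similar_neurons(toy_activations)
print(similar)
```

Cosine similarity ignores overall gain, so two neurons that differ only by a scale factor are flagged as candidates. Whether such a pair is truly redundant still needs to be checked against the network's accuracy.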

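The observation that connections stop changing when gradients vanish can also be checked numerically. The following is a minimal sketch under stated assumptions, not the TensorView implementation. It assumes the weights of each layer are available as flat lists captured at successive training steps. The helper names, the tolerance, and the layer names `conv1` and `fc` are hypothetical.

```python
import math


def update_norm(old_weights, new_weights):
    """Euclidean norm of the change between two weight snapshots of one layer."""
    return math.sqrt(sum((n - o) ** 2 for o, n in zip(old_weights, new_weights)))


def find_stalled_layers(snapshots, tolerance=1e-6):
    """List layers whose weights barely moved between the last two snapshots.

    snapshots is a list of dicts, one per recorded training step, mapping a
    layer name to a flat list of weights. The tolerance is an assumed value.
    """
    if len(snapshots) < 2:
        return []  # nothing to compare yet
    previous, current = snapshots[-2], snapshots[-1]
    return [
        name
        for name in current
        if update_norm(previous[name], current[name]) < tolerance
    ]


# Toy data: the early layer has stopped updating while the last layer still moves.
snapshots = [
    {"conv1": [0.50, -0.20, 0.10], "fc": [0.30, 0.70]},
    {"conv1": [0.50, -0.20, 0.10], "fc": [0.28, 0.74]},
]
stalled = find_stalled_layers(snapshots)
print(stalled)
```

A layer that shows up in this list for many consecutive steps, while later layers keep moving, matches the pattern of a vanishing gradient. A visual tool lets the same pattern be seen without choosing a tolerance in advance.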